Police forces across Canada are turning to artificial intelligence to predict future crimes with little oversight or regulation and inadequate consideration of the risks, according to a report from the University of Toronto’s Citizen Lab released today.

Citizen Lab research fellow Cynthia Khoo said that Canada is at a critical point in dealing with “algorithmic policing,” which uses data to predict not only where crimes might occur but who is likely to commit them.

The programs rely on data and algorithms to predict crimes in the same way social media companies use individuals’ data to target them with ads.

Khoo, a human rights lawyer, is co-author of Citizen Lab’s “To Surveil and Protect” report, which urgently calls for a federal judicial inquiry on the use of predictive technologies in police forces, a moratorium on their use until an inquiry is complete, and dramatic improvements in transparency.

The 193-page report, based on a national survey of police forces and analysis of the current and future use of AI, calls for police and oversight bodies to ensure marginalized communities are heard in considering use of the technology.

Khoo said there’s a high risk of bias when police use algorithms to predict future behaviour and events, and that can result in overpolicing of marginalized communities.

One issue is the data used in the process, she said, which relies heavily on historical police records of crimes, arrests and interactions with the public.

Past policing practices mean that marginalized groups, such as people experiencing homelessness or racialized people, are over-represented in the data because of their interactions with police and government.

The report said this “data hyper-visibility” creates “feedback loops of injustice” as communities are targeted for more policing, which in turn leads to a higher representation in police data. The data used can also reflect the biases of those creating the programs, the report says.
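The feedback loop the report describes can be illustrated with a toy model. The sketch below is not taken from the report or from any police system: the function name, the two-area setup and all the numbers are assumptions made up for illustration. It assumes two areas with the same underlying incident rate, and assigns patrols in proportion to each area's share of past records.

```python
def simulate_feedback_loop(initial_records, true_rate, rounds, patrols_per_round=100):
    """Toy model (hypothetical): return recorded totals per area after each round."""
    records = [float(r) for r in initial_records]
    history = [tuple(records)]
    for _ in range(rounds):
        total = sum(records)
        # Patrols go to each area in proportion to its share of past records.
        patrols = [patrols_per_round * r / total for r in records]
        # Every patrol records incidents at the SAME underlying rate in every area.
        new_records = [p * true_rate for p in patrols]
        records = [r + n for r, n in zip(records, new_records)]
        history.append(tuple(records))
    return history


if __name__ == "__main__":
    # Area A starts with more records than area B only because of past policing.
    for step, (area_a, area_b) in enumerate(simulate_feedback_loop([60, 40], 0.5, 3)):
        print(f"round {step}: A={area_a:.0f} B={area_b:.0f} gap={area_a - area_b:.0f}")
```

In this simplified setup the gap in recorded incidents between the two areas keeps growing even though the underlying rate is identical, because the area with more historical records keeps receiving more patrols. Real systems are far more complex, and the model says nothing about how any particular police force allocates officers.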

Fixing current data collection doesn’t address the issue, it notes.

That’s why the main recommendation is a government-mandated moratorium on police “use of technology that relies on algorithmic processing of historic mass police data sets.”

That moratorium should remain in place until a judicial inquiry assesses the technology and establishes rules for its use to ensure it meets “conditions of reliability, necessity, and proportionality.”

The Vancouver Police Department is an early adopter of both algorithmic police technology and safeguards to pre-empt possible criticisms around over-policing in vulnerable communities, the Citizen Lab report notes.

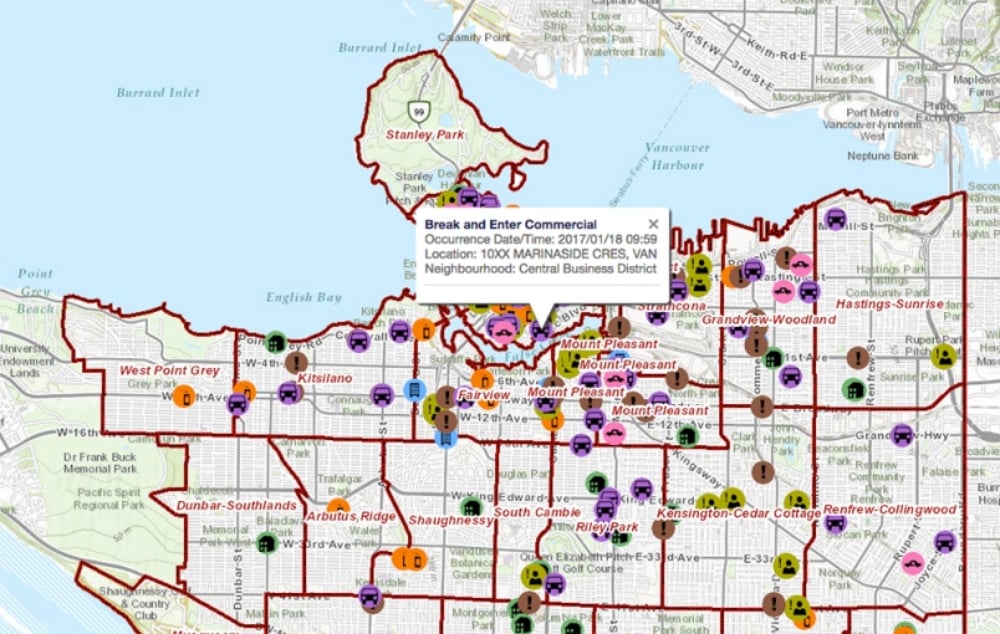

If you’ve ever heard of the department’s GeoDASH, it’s likely in reference to a handy interface that lets people examine crime maps and neighbourhood statistics.

But the GeoDASH Automated Policing System also delivers algorithmic policing capability. And the VPD’s pioneering use of it has been the subject of studies published as far away as the United Kingdom.

The Citizen Lab report looks at the department’s use of GeoDASH’s algorithmic features to analyze property crime statistics and predict where crimes are most likely to occur in future.

“After the system identifies high-risk locations, uniformed officers in marked cars are assigned to patrol these areas in order to deter criminal activity,” the report says. They look for “suspicious activity during their patrols and will engage in proactive activities, such as monitoring laneways and identifying potential POIs [persons of interest]/known offenders entering into the area.”

The department “may also notify civilian neighbourhood block watches in forecasted residential areas and ask them to exercise extra vigilance in their areas during the relevant time periods,” Vancouver Special Const. Ryan Prox told Citizen Lab. Prox heads the VPD unit that “pioneers and implements analytic programs and technology.”

The Vancouver Police Department has released a new online dashboard, intended to enhance community awareness of crime trends and to help prevent crime in Vancouver. Meet #GeoDASH! https://t.co/i1YmymtN2p #VPD #Community #CrimePrevention pic.twitter.com/FklQD9s2Vf

— Vancouver Police (@VancouverPD) December 4, 2018

The project, launched in 2016, was rated a success by the department, which credited it with a reduction in reported break-and-enter crimes.

Prox, who is also an adjunct professor of criminology at Simon Fraser University, did his PhD thesis on the pilot program.

He told Citizen Lab the VPD took care to reduce the risks of issues like overpolicing. “Exclusionary zones” such as the Downtown Eastside aren’t targeted with increased policing as a result of projections, he said.

The data used includes only community-reported incidents, he said, not officer-discovered crime, to avoid amplifying any bias from existing police presence in neighbourhoods. Any over-policing concerns are discussed at regular meetings with community policing units.

Citizen Lab researchers were unable to confirm that the precautions were supported by written policies and training for officers.

The VPD has also so far rejected the use of algorithmic technologies to predict crime by individuals, Prox told researchers. Police in cities like Chicago and Los Angeles have used data including criminal record histories, social media, friendships and movements to predict future crimes by individuals.

Khoo said the Vancouver department was an outlier in terms of its evident care and openness in implementing algorithmic programs. But the report noted the department, like forces across Canada, fell short in full and timely transparency about predictive programs.

Police forces have failed to provide the public with information about other aspects of technology used in their work. The RCMP, for instance, only revealed details about its social media monitoring Project Wide Awake after an investigation by The Tyee uncovered the program.

The RCMP also refused to say whether it was using Clearview AI’s controversial facial recognition software, or denied using it, until a hacker released the software provider’s client list. The public found out only after a year that Toronto police were using facial recognition software.

Khoo also stressed the need for more effective privacy impact assessments and algorithmic impact assessments on these kinds of programs.
